Chapter 1: AI Fundamentals for Open Banking: A Field Guide for Executives

Before you build a strategy around AI, you need to know what it actually is and what it isn't

Senior executives in banking and fintech have spent the better part of a decade working on open banking (APIs, consent management, developer ecosystems, third-party provider networks, and real-time data flows). Now the conversation has shifted. Every strategy deck mentions AI, and every vendor promises “intelligence” in their service.

Executives who can fluently explain the nuances of PSD2/Open Banking compliance, TPP authorization flows, and consent architecture find themselves nodding along to AI presentations they only half-understand.

The result is fragmented pilots, inflated expectations, and boards asking the same quiet question:

What does AI actually change here, and what does it not?

What AI actually is in this context

Once you strip away the marketing language, AI in open banking is remarkably straightforward. It is fundamentally a prediction engine that operates at the scale and speed of the data now flowing through open banking APIs.

It looks at patterns in data, makes inferences, and produces outputs: a score, a decision, a recommendation, a generated response. That’s the core mechanism. Everything else is architecture around that mechanism.

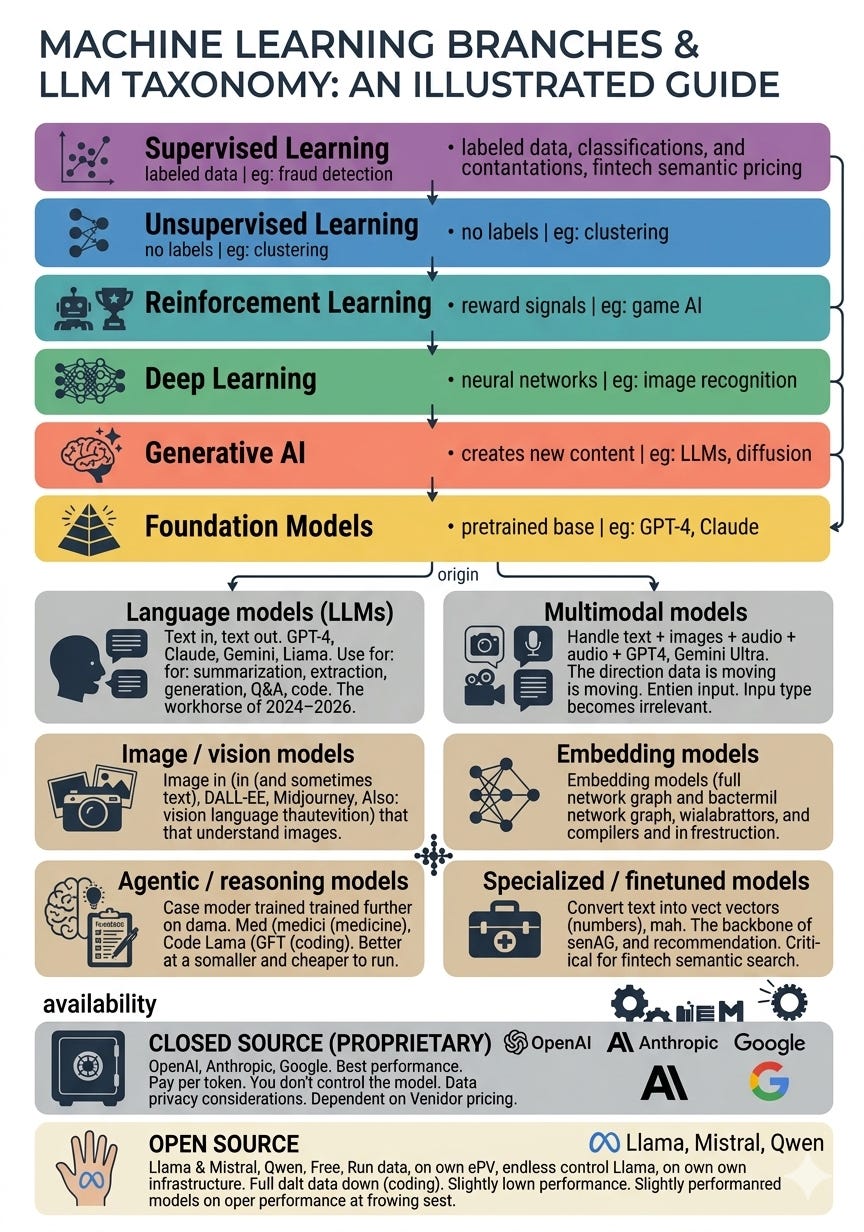

The terminology you’re hearing most often maps roughly to the following:

Machine learning is the statistical engine that finds patterns in historical data. In Open Banking, this is the engine behind transaction categorisation, credit scoring, affordability assessment, and churn prediction.

Deep learning is a subset of ML that handles high-dimensional, unstructured data through layered neural networks. Its primary Open Banking applications are fraud detection and anomaly detection, where the signal is subtle and the data is high-volume.

Natural language processing (NLP) is the AI that understands and interprets human language. In Open Banking, NLP classifies documents, extracts entities from bank statements, screens for AML red flags in text-based data, and powers the input layer for any product that accepts user queries in plain language.

Large language models (LLMs) are a specific type of model trained on text, capable of understanding and generating language (the technology behind tools like ChatGPT). This is what makes financial summaries, coaching responses, and contextual explanations possible at scale.

Agents are AI systems given tools and instructions to complete multi-step tasks autonomously. In Open Banking, early agent applications include automated account switching workflows, financial data reconciliation, and the beginnings of personalised financial planning.

MCP (Model Context Protocol) is a standard interface that lets AI agents call your APIs in a structured, reasoning-compatible way.

Inference at the pipe is running ML/AI models at the point of data transit (enriching, scoring, or deciding before data reaches the application layer).

RAG (Retrieval-Augmented Generation) is a method of grounding language models in specific data sources so they are less likely to hallucinate facts.

You don’t need to understand how these work internally. You need to understand what they’re good for and where they break down.

If you want to dive deeper into AI terminology, explore the terms below:

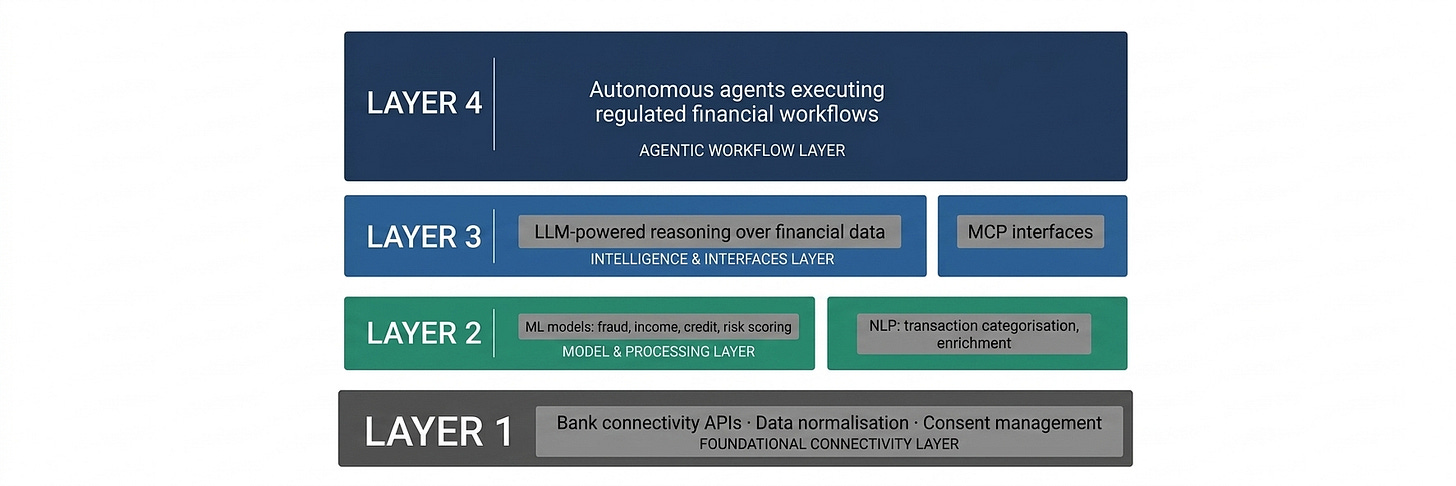

Where AI sits in the stack

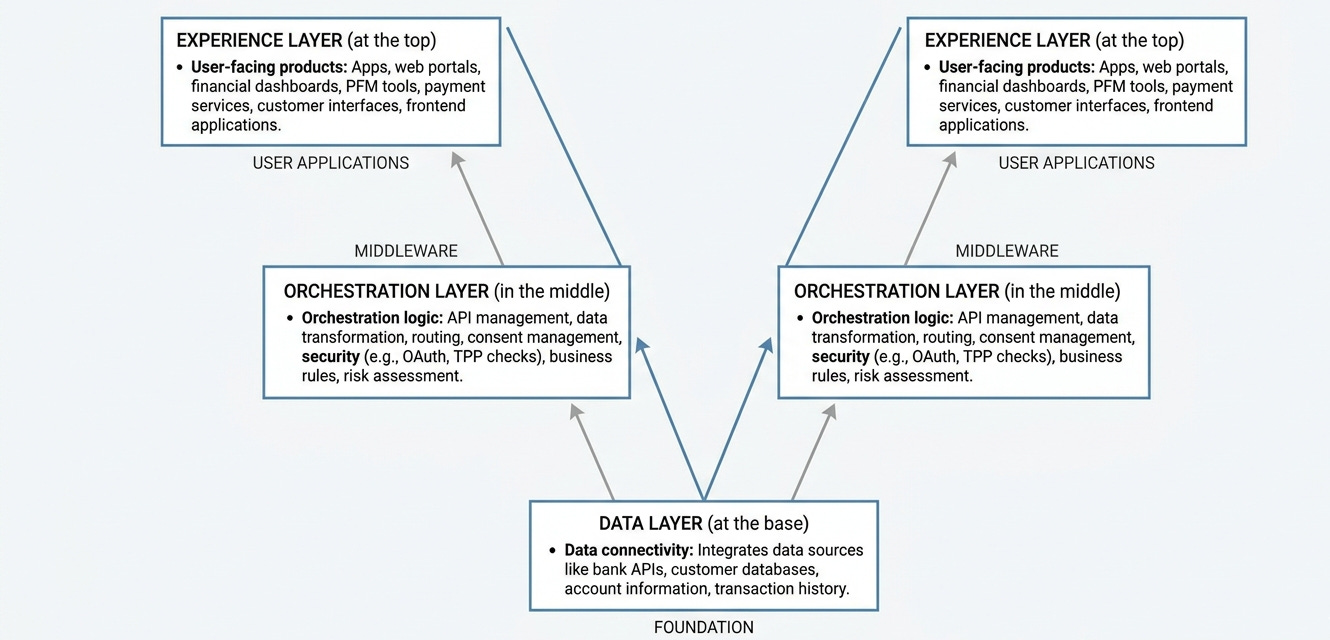

Open Banking’s architecture has three layers:

Data Layer: data connectivity at the base

Orchestration Layer: orchestration logic in the middle

Experience Layer: user-facing products at the top

AI doesn’t replace any of these layers; it amplifies them.

Data layer: AI performs enrichment and interpretation. Raw transaction data is messy: merchant names are inconsistent, categories are ambiguous, and cash flows are noisy. AI converts this raw feed into a structured, meaningful signal. This is where most of the unsexy but highest-value work happens.

Orchestration layer: AI enables intelligent decisioning. Instead of rules such as “if income is above X and debt ratio is below Y, approve”, AI builds probabilistic models that weigh dozens of variables simultaneously and update as new data arrives. This is where credit underwriting, affordability assessment, and fraud detection are being quietly transformed.

Experience layer: AI enables personalization and language. Knowing a customer’s financial behavior is one thing; communicating with them in a way that’s contextually relevant is another. LLMs make it possible to generate explanations, summaries, nudges, and advice that feel individual.

The insight here is positional: AI is most powerful when it’s embedded in the layer where friction currently lives, not bolted on top as a feature.
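To make the orchestration-layer contrast concrete, here is a minimal Python sketch that sets a fixed rule next to a simple probabilistic score. Everything in it is an assumption for illustration: the thresholds, the feature names, and especially the weights, which a real model would learn from historical repayment outcomes rather than have typed in by hand.

```python
import math


def rule_based_decision(income: float, debt_ratio: float,
                        min_income: float = 30_000,
                        max_debt_ratio: float = 0.4) -> bool:
    """Static rule: approve only if both hard thresholds are met."""
    return income > min_income and debt_ratio < max_debt_ratio


# Hypothetical weights for illustration only; a real model learns these from data.
WEIGHTS = {
    "income_stability": 1.8,
    "debt_ratio": -2.5,
    "months_of_buffer": 0.6,
    "overdraft_days_per_month": -0.3,
}
BIAS = -0.5


def repayment_score(features: dict) -> float:
    """Logistic score: a weighted sum of features mapped into the 0-1 range."""
    z = BIAS + sum(WEIGHTS[name] * features[name] for name in WEIGHTS)
    return 1.0 / (1.0 + math.exp(-z))


# Hypothetical applicant who fails both hard thresholds of the rule.
applicant = {
    "income_stability": 0.9,
    "debt_ratio": 0.45,
    "months_of_buffer": 3.0,
    "overdraft_days_per_month": 1.0,
}

print(rule_based_decision(income=28_000, debt_ratio=0.45))
print(round(repayment_score(applicant), 2))
```

In this toy setup the rule declines the applicant outright, while the score can still come out relatively high because stable income and a cash buffer offset the debt ratio. The point is the shape of the decision, not the specific numbers.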

Five capabilities of AI that actually matter in Open Banking

Rather than chasing every AI use case, executives are better served by understanding five fundamental capabilities and asking where each applies in their stack.

Pattern recognition at scale

AI can detect signals in data that human analysts would struggle to find at this volume. In Open Banking, this means:

Finding creditworthy customers who look risky by traditional metrics.

Flagging unusual behavioral patterns before fraud occurs.

Pricing lending products dynamically, based on real-time cash-flow health rather than static credit scores.
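As a rough illustration of what “flagging unusual behavioral patterns” can mean in practice, the sketch below uses a median-based (robust) z-score to flag transactions that sit far outside a customer's typical spend. This is a deliberately simple statistical stand-in, not a learned fraud model; the function name, the sample amounts, and the threshold of 3.5 (a common convention for this score) are assumptions chosen for the example.

```python
from statistics import median


def flag_unusual(amounts: list, threshold: float = 3.5) -> list:
    """Return the indexes of amounts far from the customer's typical spend.

    Uses the modified z-score: 0.6745 * |x - median| / MAD, where MAD is the
    median absolute deviation. It is less distorted by outliers than a mean.
    """
    med = median(amounts)
    mad = median([abs(a - med) for a in amounts])
    if mad == 0:
        # All amounts are (nearly) identical, so no meaningful spread to compare.
        return []
    return [
        i for i, a in enumerate(amounts)
        if 0.6745 * abs(a - med) / mad > threshold
    ]


# Hypothetical card spend for one customer; the last payment is out of pattern.
recent_spend = [42.0, 38.5, 45.0, 40.0, 39.0, 41.5, 950.0]
print(flag_unusual(recent_spend))  # [6]
```

Production systems typically weigh many more signals (merchant, device, timing, location), but the principle is the same: learn what normal looks like for this customer, then score the distance from it.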

Data synthesis

Open banking data is messy and incomplete by design (customers share only what they choose). AI’s strength is stitching partial views into a fuller picture:

Identifying that a customer’s “groceries” spend is actually split across four apps.

Spotting that a small business has two hidden overdraft facilities.

This synthesis is where the economic value compounds.
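The “groceries split across four apps” case can be sketched in a few lines. The example below is an assumption-heavy illustration: the merchant names are invented, the keyword lookup stands in for what would normally be a trained categorisation model, and amounts are held in minor units (pence) to avoid rounding issues.

```python
from collections import defaultdict

# Hypothetical keyword table; a real system would use a trained categoriser.
CATEGORY_KEYWORDS = {
    "groceries": ("freshcart", "quickgrocer", "cornermart", "veggiebox"),
    "transport": ("cityrail",),
}


def categorise(description: str) -> str:
    """Map a raw transaction description to a category via keyword match."""
    text = description.lower()
    for category, keywords in CATEGORY_KEYWORDS.items():
        if any(keyword in text for keyword in keywords):
            return category
    return "uncategorised"


def spend_by_category(transactions: list) -> dict:
    """Total spend per category across every account the customer has shared."""
    totals = defaultdict(int)
    for tx in transactions:
        totals[categorise(tx["description"])] += tx["amount_minor"]
    return dict(totals)


# Hypothetical transactions pulled from two consented accounts.
transactions = [
    {"account": "current", "description": "FRESHCART*ORDER 8841", "amount_minor": 5420},
    {"account": "credit_card", "description": "QuickGrocer App", "amount_minor": 1875},
    {"account": "current", "description": "POS CORNERMART 0032", "amount_minor": 930},
    {"account": "credit_card", "description": "VEGGIEBOX SUBSCRIPTION", "amount_minor": 2200},
    {"account": "current", "description": "CITYRAIL TICKET", "amount_minor": 1200},
]

print(spend_by_category(transactions))
```

No single account shows the full grocery spend; only the combined, categorised view does.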

Dynamic decisioning

Traditionally, routine decisions required a rule book, written at a point in time and maintained manually. AI models can learn from new data continuously:

Decisions improve over time with every new permissioned data point, without anyone rewriting the rules by hand.

For risk and compliance teams, the model does not replace policy; it operationalizes it at volume.
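For readers who want to see what “learning from new data” looks like mechanically, here is a minimal sketch of a single online update to a logistic model. The features, starting weights, and learning rate are all assumptions for illustration, and in regulated settings updates like this would more plausibly run in governed, validated retraining cycles than live on every data point.

```python
import math


def predict(weights: list, features: list) -> float:
    """Logistic prediction: probability-like score between 0 and 1."""
    z = sum(w * x for w, x in zip(weights, features))
    return 1.0 / (1.0 + math.exp(-z))


def update(weights: list, features: list, outcome: int,
           learning_rate: float = 0.1) -> list:
    """One gradient step: nudge each weight toward the observed outcome."""
    error = outcome - predict(weights, features)
    return [w + learning_rate * error * x for w, x in zip(weights, features)]


# Hypothetical features: bias term, income stability, debt ratio.
customer = [1.0, 0.8, 0.3]
weights = [0.0, 0.0, 0.0]

before = predict(weights, customer)
weights = update(weights, customer, outcome=1)  # customer repaid as agreed
after = predict(weights, customer)

print(before, after)
```

After observing a good outcome, the score for a similar customer moves up slightly. No rule was rewritten; the model's weights shifted in response to evidence.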

Language understanding and generation

This is where LLMs change the product surface. Once a system understands a customer’s financial rhythm, LLMs make it possible to do something useful with that context in plain language.

Financial summaries, spending insights, and debt explanations no longer need to be written and templated in advance. They can be generated, contextually, at the moment of need.

The output is still grounded in the same open banking data; only the presentation layer changes.
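One way to picture “grounded in the same data” is to compute the facts deterministically first and hand only those facts to the language model. The sketch below builds such a prompt; it makes no model call, and the function name, wording, and figures are hypothetical.

```python
def build_summary_prompt(facts: dict) -> str:
    """Assemble an LLM prompt that restricts the model to pre-computed facts."""
    fact_lines = "\n".join(f"- {label}: {value}" for label, value in facts.items())
    return (
        "Write a two-sentence spending summary for the customer.\n"
        "Use only the facts listed below; do not add numbers that are not listed.\n"
        f"Facts:\n{fact_lines}"
    )


# Hypothetical figures that would come from the enrichment and scoring layers.
facts = {
    "Groceries this month": "GBP 104.25",
    "Change vs last month": "+12%",
    "Largest single payment": "GBP 54.20",
}

print(build_summary_prompt(facts))
```

The numbers come from the data pipeline, not from the model. The model's job is limited to turning them into readable language, which keeps the generated text easier to check.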

Autonomous task completion

Agents represent the emerging frontier: AI systems that can take a goal and work through the steps needed to complete it, for example:

find the best switching offer for this customer

reconcile these payment discrepancies

This is early, but the direction is clear. The orchestration burden that sits inside operations teams today is AI-shaped work.

What AI will not solve

AI does not manufacture trust: AI cannot create trust where none exists. Customers still decide whether to share data based on brand perception, regulatory comfort, and past experience. Open Banking’s adoption challenge is fundamentally about consumer confidence in data sharing. No model improves that.

AI does not fix poor data quality: A model trained on incomplete, inconsistent, or biased transaction data will produce incomplete, inconsistent, or biased outputs. If open banking adoption is skewed toward certain demographics, the predictions will be skewed too. This is a product and reputation risk that executives must own.

AI does not replace judgment in novel situations: Models are trained on what has already happened. In a sudden regulatory change, an economic or market shock with no precedent, or a new fraud pattern, AI offers only weak signals. Even at best, these situations still require human judgment and rapid retraining loops.

AI cannot replace regulatory accountability: The model can report its confidence level, but it cannot sign the compliance attestation. In credit, in compliance, and in customer-facing decisions, the bank remains liable when a model recommends a lending decision or flags fraud. Your AI strategy needs answers to these key regulatory questions:

Explainability: Can your AI tell a regulator exactly why it denied a loan?

Auditability: Is every agent-led transaction logged in a way that survives a compliance sweep?

Governance: Is your “AI Agent” acting within the user’s specific “Dynamic Consent”?
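The three questions above can be tied together in a small sketch: before an agent acts, check the action against the customer's consent, and log the decision with a reason either way. The class names, scope strings, and log fields below are hypothetical and do not follow any particular open banking standard; they only show the shape of the control.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone


@dataclass(frozen=True)
class Consent:
    customer_id: str
    scopes: frozenset
    expires_at: datetime


@dataclass
class AuditLog:
    entries: list = field(default_factory=list)

    def record(self, **entry) -> None:
        self.entries.append(entry)


def authorise_agent_action(consent: Consent, required_scope: str,
                           action: str, log: AuditLog, now: datetime) -> bool:
    """Allow the action only within live consent; log the outcome and reason."""
    if now >= consent.expires_at:
        allowed, reason = False, "consent expired"
    elif required_scope not in consent.scopes:
        allowed, reason = False, "scope not granted"
    else:
        allowed, reason = True, "within consent"
    log.record(at=now.isoformat(), customer_id=consent.customer_id,
               action=action, required_scope=required_scope,
               allowed=allowed, reason=reason)
    return allowed


# Hypothetical consent: read-only access to accounts.
consent = Consent("cust-001", frozenset({"accounts:read"}),
                  datetime(2030, 1, 1, tzinfo=timezone.utc))
log = AuditLog()
now = datetime(2025, 6, 1, tzinfo=timezone.utc)

print(authorise_agent_action(consent, "accounts:read", "fetch_balances", log, now))
print(authorise_agent_action(consent, "payments:initiate", "switch_account", log, now))
print(log.entries[-1]["reason"])
```

Every attempt is recorded, including the refused ones, and each entry carries a plain-language reason. That record is what an auditor or regulator would ask to see.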

The real value of AI in open banking is the quiet work of making existing data more useful, existing decisions more accurate, and existing operations more scalable. The executives who treat AI as infrastructure rather than innovation theater will build the durable advantages.

This series will go on to cover AI across specific Open Banking use cases. But the frame stays consistent throughout:

What does this actually do, where does it actually fit, and what does it actually change?